While there are many great tutorials on integrating Java and Python applications with Kafka, PHP is often left out. However, with its scalability, high performance and high availability, Kafka is a promising data infrastructure for combining legacy applications (such as PHP monoliths) with modern microservices.

This article shows you how to set up a Kafka-PHP development environment from scratch using Docker and how to send your first message from a PHP application to a Kafka topic.

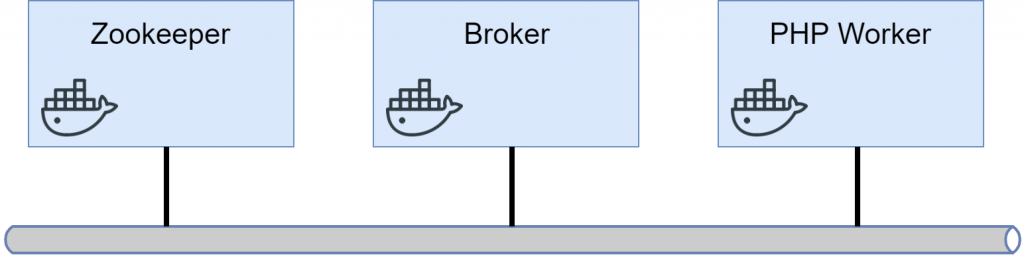

Architecture Overview

For this simple scenario, we will create three Docker containers: one running zookeeper, one running a single Kafka broker and one running the PHP message producer (which we will call the “PHP worker”).

When everything is up and running, we want the PHP worker to be able to send messages to a Kafka topic by connecting to the Broker.

Docker Setup

For this quick development setup, we will deploy Kafka as two Docker containers: (1) a zookeeper instance and (2) a single broker.

First, we need to create a new Docker network that will be used by the containers to communicate:

docker network create kafka

Then we spawn the zookeeper container. It is based on the official image published by Confluent (the commercial company founded by the creators of Apache Kafka).

docker run --name=zookeeper --net=kafka -d -e ZOOKEEPER_CLIENT_PORT=2181 confluentinc/cp-zookeeper

The zookeeper instance acts as a distributed configuration management service and ensures that the individual Kafka brokers interact with each other in an ordered way (we only use a single broker here for simplicity; a production environment would use several). Its responsibilities include [1]:

- Controller election (important for monitoring broker health and activating replicas in case of failures)

- Topic configuration (managing partitions, replica locations, leader election, …)

- Access control

- Cluster membership

Next, we will launch a single Kafka broker and initialize it with the zookeeper instance’s server name and port:

docker run --net=kafka -d -p 9092:9092 --name=kafka -e KAFKA_ZOOKEEPER_CONNECT=zookeeper:2181 -e KAFKA_ADVERTISED_LISTENERS=PLAINTEXT://kafka:9092 -e KAFKA_OFFSETS_TOPIC_REPLICATION_FACTOR=1 confluentinc/cp-kafka

Let’s revisit the parameters:

- --net=kafka instructs Docker to attach the container to the previously created “kafka” Docker network

- -p 9092:9092 publishes the broker’s port on the host, so the port can be reached via localhost (clients outside the Docker network also need to be able to resolve the advertised host name “kafka”)

- -e KAFKA_ZOOKEEPER_CONNECT=zookeeper:2181 configures the host name and port of the previously spawned zookeeper instance (notice that “zookeeper” is used directly as a host name because it can be resolved by the Docker name service)

- -e KAFKA_ADVERTISED_LISTENERS=PLAINTEXT://kafka:9092 sets the listener metadata that is passed back to Kafka clients as a tuple of (protocol, host, port). We use the plaintext protocol, the container’s own host name (“kafka”) and the default port 9092.

- -e KAFKA_OFFSETS_TOPIC_REPLICATION_FACTOR=1 sets the replication factor of Kafka’s internal offsets topic to 1, which is necessary because we only run a single broker (warning: this should not be used in a production setup).
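The three setup commands above can be collected in a small script. The following is only a sketch: the helper names run and setup_kafka and the DRY_RUN variable are made up for this illustration and are not part of Docker or Kafka. By default the script only prints the commands; setting DRY_RUN=0 would actually execute them.

```shell
#!/bin/sh
# Hypothetical helper: prints each command and executes it only when DRY_RUN=0.
run() {
  echo "+ $*"
  if [ "${DRY_RUN:-1}" = "0" ]; then
    "$@"
  fi
}

# Hypothetical wrapper around the three setup commands from this article.
# The && chain stops at the first command that fails.
setup_kafka() {
  run docker network create kafka &&
  run docker run --name=zookeeper --net=kafka -d \
    -e ZOOKEEPER_CLIENT_PORT=2181 confluentinc/cp-zookeeper &&
  run docker run --net=kafka -d -p 9092:9092 --name=kafka \
    -e KAFKA_ZOOKEEPER_CONNECT=zookeeper:2181 \
    -e KAFKA_ADVERTISED_LISTENERS=PLAINTEXT://kafka:9092 \
    -e KAFKA_OFFSETS_TOPIC_REPLICATION_FACTOR=1 confluentinc/cp-kafka
}

setup_kafka
```

Printing the commands first makes it easy to review what would be started before any container is created.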

Creating a Topic

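Once both containers are up and running, we can create our first topic. First, we open a shell inside the broker container (since we named the container “kafka”, that name can be used in place of the container ID):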

docker exec -it <Container ID> /bin/bash

Next, we can use the following command to create a new topic named “helloworld” with a single partition and without replication:

/usr/bin/kafka-topics --zookeeper zookeeper:2181 --create --topic helloworld --replication-factor 1 --partitions 1

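Again, we can use “zookeeper” as the host name for the zookeeper instance since both containers are located in the same Docker network. Note that Kafka 3.0 and later no longer accept the --zookeeper option for kafka-topics; with such versions, pass --bootstrap-server kafka:9092 instead.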

To verify that the topic has been created successfully, run the following list command:

> /usr/bin/kafka-topics --zookeeper zookeeper:2181 --list

__confluent.support.metrics

helloworld
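If the check should run as part of a script rather than by eye, the listing can be filtered for an exact topic name. This is a minimal sketch: topic_exists is a hypothetical helper name, and the sample listing is copied from the output shown above so that the snippet runs without a broker.

```shell
#!/bin/sh
# Hypothetical helper: reads a topic listing (one name per line) from stdin and
# succeeds only if one line matches the given topic name exactly.
topic_exists() {
  grep -Fxq -- "$1"
}

# Sample listing copied from this article. Inside the broker container you
# would pipe the real command instead:
#   /usr/bin/kafka-topics --zookeeper zookeeper:2181 --list | topic_exists helloworld
listing='__confluent.support.metrics
helloworld'

if printf '%s\n' "$listing" | topic_exists helloworld; then
  echo "topic helloworld exists"
else
  echo "topic helloworld is missing" >&2
fi
```

The -x option makes grep match whole lines only, so a topic named “hello” would not be mistaken for “helloworld”.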

Introducing a PHP Producer

To add messages to our newly created Kafka topic from a PHP app, we will use the Enqueue library [2]. We can install the library with the following Composer command:

composer require enqueue/rdkafka

As a proof of concept, we will add a “Hello World” message to the previously created helloworld topic.

First, we define a new Dockerfile for our PHP app. The Enqueue library needs the rdkafka PHP extension, which in turn depends on the librdkafka C library, so we build librdkafka from source and then install and enable the extension. The Dockerfile below is based on the one from Jérôme Gamez [3].

FROM php:7.2-cli

COPY . /usr/src/myapp

WORKDIR /usr/src/myapp

CMD [ "php", "./app.php" ]

ENV LIBRDKAFKA_VERSION v0.9.5

ENV BUILD_DEPS \
    build-essential \
    git \
    libsasl2-dev \
    libssl-dev \
    python-minimal \
    zlib1g-dev

RUN apt-get update \
    && apt-get install -y --no-install-recommends ${BUILD_DEPS} \
    && cd /tmp \
    && git clone \
        --branch ${LIBRDKAFKA_VERSION} \
        --depth 1 \
        https://github.com/edenhill/librdkafka.git \
    && cd librdkafka \
    && ./configure \
    && make \
    && make install \
    && pecl install rdkafka \
    && docker-php-ext-enable rdkafka \
    && rm -rf /tmp/librdkafka \
    && apt-get purge \
        -y --auto-remove \
        -o APT::AutoRemove::RecommendsImportant=false \
        ${BUILD_DEPS}

In short, we start from a PHP 7.2 image, copy our code (including the vendor directory containing the Enqueue library), install the tools necessary for building librdkafka, build the library, and finally install and enable the rdkafka extension.

Now we add the following app.php containing our application code:

<?php

require __DIR__ . '/vendor/autoload.php';

use Enqueue\RdKafka\RdKafkaConnectionFactory;

$connectionFactory = new RdKafkaConnectionFactory([

'global' => [

'group.id' => uniqid('', true),

'metadata.broker.list' => 'kafka:9092',

'enable.auto.commit' => 'true',

],

'topic' => [

'auto.offset.reset' => 'beginning',

],

]);

$context = $connectionFactory->createContext();

$message = $context->createMessage('Hello world!');

$fooTopic = $context->createTopic('helloworld');

$context->createProducer()->send($fooTopic, $message);

echo 'A test message has been sent to the topic "helloworld".' . PHP_EOL;

This will autoload the Enqueue library, create a connection to the broker instance and send a test message.

To verify that our application is actually sending the message, we can use Kafka’s console consumer from within the broker container (again by opening a shell in the container with docker exec):

/usr/bin/kafka-console-consumer --bootstrap-server kafka:9092 --topic helloworld

While keeping the console with the consumer process open, we can now build the PHP app’s Docker image and spawn a container in a different console:

docker build -t phpworker .

docker run --net=kafka -d --name=phpworker phpworker

If everything worked out, you should see the test message in the console consumer:

{"body":"Hello world!","properties":[],"headers":[]}
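The consumer output suggests that Enqueue wraps the payload in a JSON envelope with body, properties and headers fields. To pull out just the payload while inspecting messages on the console, a quick sed filter can help. This is only a sketch: extract_body is a hypothetical helper name, and the pattern assumes the body contains no escaped double quotes, so a real JSON parser is the better choice for anything beyond a quick look.

```shell
#!/bin/sh
# Envelope exactly as printed by the console consumer in this article.
envelope='{"body":"Hello world!","properties":[],"headers":[]}'

# Hypothetical helper: prints the value of the "body" field.
# Assumption: the body contains no escaped double quotes; this is a quick
# inspection aid, not a JSON parser.
extract_body() {
  printf '%s\n' "$1" | sed -n 's/.*"body":"\([^"]*\)".*/\1/p'
}

extract_body "$envelope"
```

For the sample envelope, the script prints Hello world! on a single line.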

Wrap up

While this demo application does not yet perform useful work, it shows how a legacy application can be integrated into a modern message-based infrastructure.

Imagine you have a monolith where consumers can order services and will later receive a PDF invoice. In order to extract the invoice generation process into its own microservice, the monolith can be adapted such that each order creates a message in an “orders” topic (as shown in this article). The new invoice generation service can then listen for new messages on that topic and create invoices once they arrive. Since the availability of the invoice generation service does not directly impact the remaining monolith, it can be developed and deployed independently, resulting in a clean and decoupled architecture.

Links

[1] https://www.cloudkarafka.com/blog/2018-07-04-cloudkarafka_what_is_zookeeper.html

[2] https://github.com/php-enqueue/enqueue-dev

[3] https://medium.com/@jeromegamez/adding-kafka-support-to-php-on-docker-56a3881043c1
